Are you interested in creating games for Windows Phone 7 but don't have XNA experience? Watch the recording of the recent intro webinar delivered by Peter Kuhn, "XNA for Windows Phone 7".

This article is part 5 of the series "XNA for Silverlight developers".

This article is compatible with Windows Phone "Mango".

Latest update: Aug 1, 2011.

User input on a mobile device is always an interesting topic because it already differs so much from normal desktop applications on a conceptual level. You have no pointing device like a mouse, which eliminates some possibilities altogether (hovering or right-clicking, for example), but on the other hand modern technology adds an entirely new class of features, such as multi-touch gestures and additional sensors (accelerometers, for example).

I've already talked about the theory and rationale of "event-driven vs. polling" input in the first part of the series, and I encourage you to revisit that section to brush up on the topic. This article mostly skips the theory and takes a closer look at how you actually make use of user input, diving into the code involved.

Silverlight

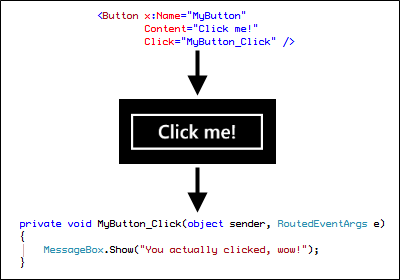

A Silverlight app on the phone works very much like the desktop version regarding input. You usually have some sort of control that exposes various user interaction events. You then add event handlers to the events you're interested in, and when the user action happens, your event handler is invoked automatically by the runtime. The following shows the button click event as an example.
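As a minimal sketch of such a click handler, the following wires everything up in code only. The class name `SamplePage`, the button text and the message are made up for illustration; in a real project the button would usually be declared in XAML.

```csharp
using System.Windows;
using System.Windows.Controls;

// Hypothetical control used only for illustration.
public class SamplePage : UserControl
{
    public SamplePage()
    {
        var button = new Button { Content = "Click me" };

        // subscribe once; the runtime invokes the handler
        // whenever the user taps the button
        button.Click += OnButtonClick;

        Content = button;
    }

    // invoked by the runtime, never called by our own code
    private void OnButtonClick(object sender, RoutedEventArgs e)
    {
        MessageBox.Show("Button clicked");
    }
}
```

Note that nothing in this class ever asks whether the button was clicked; the handler simply runs when the runtime decides that a click has happened.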

The crucial part is not the way you add your event handlers, i.e. whether you use XAML to do that or code-behind. You might even use a completely different approach, for example in an application designed with the MVVM pattern where you use commands instead of events. The important detail is that your application is in the passive role here. It just sits there, waits and does nothing until something of interest happens. It reacts to events the runtime manages and pushes towards you.

This is even true if you are not interested in the input events of a particular control but want to integrate with gestures, for example. To do this you would use the gesture listener provided by the Silverlight Toolkit for Windows Phone. It raises events you can listen to whenever gestures are detected, so the same programming model applies to this scenario too. If you want to learn more about the gesture listener in Silverlight, you can read one of my blog posts where I give more explanations and a sample with source code.

XNA

In XNA the fundamental concept of how you acquire user input is completely different. All of a sudden, your code is in the active role and has to ask the runtime about user input. If you don't ask, you'll never notice it. One of the central classes for input in XNA on the phone is TouchPanel. It is a static class with only a handful of properties and methods, which let you set up the input features and retrieve all input data that is available through the touch screen of a device. The required steps are:

- Tell the touch panel what kind of input you are interested in (this is a one-time step that only needs to be repeated when you want to be notified of different input data in different contexts, see below).

- Ask the touch panel if input data is available (you have to do this regularly/in each frame).

- Retrieve and process the data.

Additionally you can ask the touch panel for its capabilities, but since all Windows Phone 7 devices report the same feature set, that currently is of little use on this platform. Let's see how this translates into code:
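As a brief aside before the individual steps, querying the capabilities could look like the following sketch. The class and method names are hypothetical, and writing to the debug output is just one way to inspect the values.

```csharp
using System.Diagnostics;
using Microsoft.Xna.Framework.Input.Touch;

public static class TouchCapabilitiesSample
{
    // Hypothetical helper: writes the reported capabilities to the debug output.
    public static void LogCapabilities()
    {
        TouchPanelCapabilities capabilities = TouchPanel.GetCapabilities();

        Debug.WriteLine("Touch panel connected: " + capabilities.IsConnected);
        Debug.WriteLine("Maximum touch count: " + capabilities.MaximumTouchCount);
    }
}
```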

Enabling gestures

Enabling gestures is very simple. The GestureType enumeration lists the different types of gestures that are supported on the device, and you assign one value or a combination of several to the static "EnabledGestures" property of the touch panel. This is something you typically do in your game class constructor for simple games; in most projects, however, you will work with a concept of different "screens" (for example for menus, the actual game, supplementary content like high scores etc.). In these cases you can re-initialize this property for each screen that is shown to the user so that only those gestures you actually need in a particular context are enabled.

    // enable gestures
    TouchPanel.EnabledGestures = GestureType.Tap | GestureType.Flick;
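For the per-screen setup mentioned above, one possible arrangement is sketched below. `GameScreen`, `MenuScreen`, `PlayScreen` and `Activate` are hypothetical names that are not part of XNA; the sketch assumes your own screen management code calls `Activate` whenever a screen becomes the active one.

```csharp
using Microsoft.Xna.Framework.Input.Touch;

// Hypothetical base class for the "screens" of a game; not part of XNA.
public abstract class GameScreen
{
    // Each screen declares the gestures it actually needs.
    protected abstract GestureType RequiredGestures { get; }

    // Assumed to be called by your own screen management code
    // whenever this screen becomes the active one.
    public void Activate()
    {
        TouchPanel.EnabledGestures = RequiredGestures;
    }
}

// A menu only needs taps.
public sealed class MenuScreen : GameScreen
{
    protected override GestureType RequiredGestures
    {
        get { return GestureType.Tap; }
    }
}

// The actual game additionally reacts to flicks and drags.
public sealed class PlayScreen : GameScreen
{
    protected override GestureType RequiredGestures
    {
        get { return GestureType.Tap | GestureType.Flick | GestureType.FreeDrag; }
    }
}
```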

Tap is the simplest gesture available and denotes that the user briefly touches a single point on the screen. You can think of it as the touch counterpart of a mouse click. When you look at the other enumeration values you can see that all of the common gestures are available:

- Tap, DoubleTap, Hold: These involve touching a single point on the screen and are usually used to invoke some action like "clicking" or selecting something.

- HorizontalDrag, VerticalDrag, FreeDrag, DragComplete: These are the gestures that are a combination of touching and dragging on the screen. You can use these to move objects around the screen, or for scrolling, panning and similar actions.

- Pinch, PinchComplete: Explained as "two-finger dragging" in the documentation. This is normally used for features like zooming.

- Flick: A special gesture that is positionless. It describes quick swipes on the screen that often are used for flipping through items or pages etc.
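To give an idea of how a two-finger gesture from this list can be used, here is a rough sketch of a zoom factor derived from a pinch sample. The helper name `GetPinchScale` is made up; the sketch assumes that GestureType.Pinch has been enabled and relies on the "Position", "Position2", "Delta" and "Delta2" properties of the gesture sample.

```csharp
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Input.Touch;

public static class PinchSample
{
    // Hypothetical helper: returns the factor by which the distance between
    // the two fingers changed since the previous pinch sample.
    public static float GetPinchScale(GestureSample gesture)
    {
        // current positions of both fingers
        Vector2 current1 = gesture.Position;
        Vector2 current2 = gesture.Position2;

        // previous positions = current positions minus the reported deltas
        Vector2 previous1 = current1 - gesture.Delta;
        Vector2 previous2 = current2 - gesture.Delta2;

        float previousDistance = Vector2.Distance(previous1, previous2);
        float currentDistance = Vector2.Distance(current1, current2);

        // avoid dividing by zero if both previous points coincide
        if (previousDistance <= float.Epsilon)
        {
            return 1.0f;
        }

        return currentDistance / previousDistance;
    }
}
```

In the gesture loop of your game you could then multiply a scale field of your own by this factor whenever the gesture type is Pinch.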

Reading gestures

You have to check for available gesture data in each call of the "Update" method in your game, or you might miss gesture data. The following code shows how to do this to retrieve individual GestureSample objects which contain the actual details about the detected gesture:

    // check whether gestures are available
    while (TouchPanel.IsGestureAvailable)
    {
        // read the next gesture
        var gesture = TouchPanel.ReadGesture();

        // has the user tapped the screen?
        if (gesture.GestureType == GestureType.Tap)
        {
            // move the sprite to the tap position
            _position = new Vector2(gesture.Position.X - _sprite.Width / 2.0f,
                                    gesture.Position.Y - _sprite.Height / 2.0f);
        }
        else if (gesture.GestureType == GestureType.Flick)
        {
            // add a fraction of the flick delta (= velocity) to the position
            _position += gesture.Delta * 0.1f;

            // make sure the sprite doesn't move off screen
            var viewPort = _graphics.GraphicsDevice.Viewport;
            var min = new Vector2(-_sprite.Width / 2.0f, -_sprite.Height / 2.0f);
            var max = new Vector2(viewPort.Width - _sprite.Width / 2.0f, viewPort.Height - _sprite.Height / 2.0f);
            _position = Vector2.Clamp(_position, min, max);
        }
    }

You can ask the touch panel for available gestures using the "IsGestureAvailable" property. Please note that multiple gesture samples can be available, which is why I'm using a while loop here to make sure I retrieve all of them. Next you retrieve the gesture sample by calling "ReadGesture" on the touch panel. From there, you simply check for the gesture type that was detected and process the data accordingly.

As you can see, all the different gestures work with the same data structures. But not all the properties of a gesture sample are meaningful for every gesture type. You have to refer to the above linked documentation to decide what pieces of information really make sense for a certain gesture. For example, in the above code I use "Position" for the tap and the "Delta" property for flicks; since flicks are positionless, you cannot use the "Position" property with them.
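The same idea carries over to the drag gestures, where "Delta" describes the movement since the previous sample. The following is only a sketch with a hypothetical helper name (`ApplyDrag`), and it assumes that the corresponding drag gestures have been enabled on the touch panel.

```csharp
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Input.Touch;

public static class DragSample
{
    // Hypothetical helper: moves a position by the delta of a drag gesture.
    public static Vector2 ApplyDrag(Vector2 position, GestureSample gesture)
    {
        switch (gesture.GestureType)
        {
            case GestureType.FreeDrag:
            case GestureType.HorizontalDrag:
            case GestureType.VerticalDrag:
                // drag samples report the movement since the previous sample
                return position + gesture.Delta;

            default:
                // all other gestures (including DragComplete) are ignored here
                return position;
        }
    }
}
```

Inside the gesture loop this could be used as `_position = DragSample.ApplyDrag(_position, gesture);`.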

Conclusion

This sample shows how the major difference between XNA and Silverlight regarding input translates to code, and how your game actively has to ask for input data instead of receiving that information through events.

The attentive reader will notice another detail here. Where you can target a particular element in Silverlight and have notifications sent to your application only for this element, the XNA approach is far more generic. The filtering for useful information has to happen in your application. That means that once you know that the user has touched the screen, you have to decide yourself whether this is of any use to you, for example whether the location the touch panel has reported belongs to some element on the screen that should react to user input. In Silverlight, if a click event like the one above happens, you already know that it belongs to the button and what action to invoke as a result, and you will never receive that button click event when the user clicks outside the button bounds.
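A minimal sketch of such manual filtering is shown below, assuming the element you care about can be described by a rectangle in screen coordinates. The helper name `IsTapInside` is hypothetical.

```csharp
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Input.Touch;

public static class HitTestSample
{
    // Hypothetical helper: true if the gesture is a tap inside the given bounds.
    public static bool IsTapInside(GestureSample gesture, Rectangle bounds)
    {
        if (gesture.GestureType != GestureType.Tap)
        {
            return false;
        }

        // Rectangle works with integers, so truncate the float position
        return bounds.Contains((int)gesture.Position.X, (int)gesture.Position.Y);
    }
}
```

A menu screen could keep one rectangle per menu entry and run every tap through a check like this to find out which entry, if any, the user has hit.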

A simplified approach

What surprisingly many XNA developers do not know is that there's a simpler way to access touch input data for simple scenarios: the Mouse class. Even though there's no such thing as a mouse on the phone, the library developers have mapped the primary touch point data to what corresponds to the left mouse button on the desktop. This means that if you're only interested in tap data, you can use something like this in your Update method:

    // get the "mouse" state
    var mouseState = Mouse.GetState();

    // the left button press is equal to touching the screen
    if (mouseState.LeftButton == ButtonState.Pressed)
    {
        // move the sprite to the "mouse click" position
        _position = new Vector2(mouseState.X - _sprite.Width / 2.0f,
                                mouseState.Y - _sprite.Height / 2.0f);
    }

Note that the "Pressed" state of the left mouse button is the only situation in which this class returns meaningful data for the X and Y coordinates. More details on this can be found here. Since this data is also updated when the user moves their finger around the screen, you can even create simple dragging functionality using this approach.
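The dragging idea could be sketched roughly as follows. `MouseDragTracker` is a hypothetical helper, not an XNA class; it remembers the touch position of the previous frame and moves a position by the difference while the "button" stays pressed.

```csharp
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Input;

// Hypothetical helper that moves a position along with the primary touch point.
public sealed class MouseDragTracker
{
    private bool _wasPressed;
    private Vector2 _lastTouch;

    // Call once per frame from Update; returns the (possibly moved) position.
    public Vector2 Update(MouseState mouseState, Vector2 position)
    {
        bool isPressed = mouseState.LeftButton == ButtonState.Pressed;

        if (isPressed)
        {
            var touch = new Vector2(mouseState.X, mouseState.Y);

            if (_wasPressed)
            {
                // finger is still down: move by the distance travelled since the last frame
                position += touch - _lastTouch;
            }

            _lastTouch = touch;
        }

        _wasPressed = isPressed;
        return position;
    }
}
```

With a tracker instance stored in a field, the Update method of the game would contain a line like `_position = _dragTracker.Update(Mouse.GetState(), _position);`.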

Taking a deeper dive

Working with gestures is already an abstraction of what actually happens on the touch panel. Flicking or dragging is only an interpretation of a series of low-level touch samples that occur over time, provided to make it easier for developers to work with these common gestures. If you want, you can make use of these low-level samples for your own scenarios. Why would you want to do that? Well, every Windows Phone 7 touch screen is capable of handling at least four touch points at the same time. The built-in convenience wrappers for gestures only make use of up to two points (pinching). So digging deeper into the touch input features allows you not only to create your own gesture types, but also to exploit those additional touch points and create new and innovative input concepts that go way beyond the usual. Let's see how that works.

In your "Update" method, call the "GetState" method on the touch panel to retrieve a collection of touch locations. Each of these locations has the following properties:

- Id: This value is very important for the tracking of successive samples that belong to the same logical touch action. Each time a finger touches the screen, a new id is generated. This id will stay the same for all of the following touch samples of this finger until the user removes it from the screen. Because you will retrieve multiple touch locations when the user puts multiple fingers on the screen, this is the value to determine which touch locations belong to the same finger over a period of time.

- Position: The position of the touch point as a 2D vector.

- State: One of the possible touch location state enum values. New touch locations have a state of "Pressed", locations that were existing before have "Moved". Please note that you'll receive "Moved" location info even if the finger has not really moved physically. "Released" happens when the finger is removed, and "Invalid" should only occur when you try to use the TryGetPreviousLocation method and there is no such previous location.
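To illustrate the previous location mentioned in the last item, here is a small sketch that computes how far a finger has moved since the preceding sample. The helper name `GetMovement` is made up for this example.

```csharp
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Input.Touch;

public static class TouchTrackingSample
{
    // Hypothetical helper: how far a finger has moved since the previous sample.
    public static Vector2 GetMovement(TouchLocation touchLocation)
    {
        TouchLocation previous;

        if (touchLocation.State == TouchLocationState.Moved
            && touchLocation.TryGetPreviousLocation(out previous))
        {
            return touchLocation.Position - previous.Position;
        }

        // new, released or invalid locations have no usable movement
        return Vector2.Zero;
    }
}
```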

Here is some sample code that makes use of touch locations. First the "Update" method:

    // clear previous locations
    _touchLocations.Clear();

    // get the raw state of the touch panel
    var touchCollection = TouchPanel.GetState();
    foreach (var touchLocation in touchCollection)
    {
        // we are only interested in pressed (= new)
        // and updated (= moved) locations
        if (touchLocation.State == TouchLocationState.Moved
            || touchLocation.State == TouchLocationState.Pressed)
        {
            // store this location for the draw method
            _touchLocations.Add(touchLocation);
        }
    }

Then, in the draw method, I simply output the number of recognized touch locations and one sprite for each of them (including a text overlay that prints out the id).

    // draw number of touch locations
    _spriteBatch.DrawString(_font, "Number of touch locations: " + _touchLocations.Count, Vector2.Zero, Color.White);

    foreach (var touchLocation in _touchLocations)
    {
        // calculate position of sprite
        var position = new Vector2(touchLocation.Position.X - _sprite.Width / 2.0f,
                                   touchLocation.Position.Y - _sprite.Height / 2.0f);

        // draw sprite
        _spriteBatch.Draw(_sprite, position, Color.Blue);

        // draw id
        _spriteBatch.DrawString(_font, touchLocation.Id.ToString(), position, Color.White);
    }

Of course working with multiple touch locations makes most sense on a real device, not in the emulator.

If you want to develop your own gestures, you have to collect these samples and try to interpret them as they occur, according to your requirements. This however is way beyond the scope of this article.
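Just to hint at the first step, collecting the samples, the following sketch records the path of every finger keyed by its touch id. `TouchPathRecorder` is a hypothetical class, and the actual interpretation of the recorded paths is deliberately left out.

```csharp
using System.Collections.Generic;
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Input.Touch;

// Hypothetical helper that records the path of every finger, keyed by touch id.
public sealed class TouchPathRecorder
{
    private readonly Dictionary<int, List<Vector2>> _paths =
        new Dictionary<int, List<Vector2>>();

    public IDictionary<int, List<Vector2>> Paths
    {
        get { return _paths; }
    }

    // Call once per frame with the result of TouchPanel.GetState().
    public void Update(IEnumerable<TouchLocation> touchLocations)
    {
        foreach (var touchLocation in touchLocations)
        {
            switch (touchLocation.State)
            {
                case TouchLocationState.Pressed:
                    // a new finger: start a fresh path for its id
                    _paths[touchLocation.Id] = new List<Vector2> { touchLocation.Position };
                    break;

                case TouchLocationState.Moved:
                {
                    // a known finger: append the sample to its path
                    List<Vector2> path;
                    if (_paths.TryGetValue(touchLocation.Id, out path))
                    {
                        path.Add(touchLocation.Position);
                    }
                    break;
                }

                case TouchLocationState.Released:
                    // a real recognizer would interpret the finished path here
                    _paths.Remove(touchLocation.Id);
                    break;
            }
        }
    }
}
```

In the Update method of the game this would be fed with `_recorder.Update(TouchPanel.GetState());`, where `_recorder` is a field you add yourself.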

Summary

In this part we have learned about the various possibilities of touch input on Windows Phone 7 in XNA. You can use the simplest method, the mouse equivalent, for simple situations like menu screens, or go all the way down to collecting low-level touch samples to create a multi-touch input application. In between those extremes, you have access to pre-built gestures that cover the most common input requirements more comfortably. You can download the source code for the three samples demonstrated above here:

Download source code

Please feel free to add your comments and thoughts, or contact me directly with any questions you have.

About the author

Peter Kuhn aka "Mister Goodcat" has been working in the IT industry for more than ten years. After being a project lead and technical director for several years, developing database and device controlling applications in the field of medical software and bioinformatics as well as for knowledge management solutions, he founded his own business in 2010. Starting in 2001 he jumped onto the .NET wagon very early, and has also used Silverlight for business application development since version 2. Today he uses Silverlight, .NET and ASP.NET as his preferred development platforms and offers training and consulting around these technologies and general software design and development process questions.

He created his first game at the age of 11 on his father's Commodore 64 and has finished several hobby and also commercial game projects since then. His last commercial project was the development of Painkiller: Resurrection, a game published in late 2009, which he supervised and contributed to as technical director in his free time. You can find his tech blog here: http://www.pitorque.de/MisterGoodcat/